大数据 Spark Spark3.x核心算子原理解析:数据流转与Shuffle机制[后面会拆分] XR 2025-07-09 2026-02-09 Spark3.x核心算子原理深度剖析:数据流转与Shuffle机制 一、引言:理解Spark算子的本质 在Spark开发中,我们每天都在使用各种算子,但很少有人真正理解它们背后的执行原理。这篇文章从实际执行的角度,深入分析Spark3.x中几个核心算子的内部机制。

文档结构概览:

graph TD

A[一、引言:理解Spark算子的本质] --> A1[1.1 算子分类与特性]

A --> A2[1.2 算子执行的内存模型]

A --> A3[1.3 数据序列化与网络传输]

A --> A4[1.4 为什么理解算子原理如此重要]

A --> A5[1.5 RDD懒惰计算机制深度解析]

A --> A6[1.6 数据本地性原理深度解析]

A --> B[二、基础算子:map、filter、flatMap]

B --> B1[2.1 map算子:一对一转换]

B --> B2[2.2 filter算子:条件过滤]

B --> B3[2.3 flatMap算子:一对多转换]

B --> B4[2.4 算子链优化机制深度剖析]

B --> C[三、Shuffle机制深度解析]

C --> C1[3.1 什么是Shuffle?]

C --> C2[3.2 Shuffle的两个阶段]

C --> D[四、复杂算子:distinct、sortBy]

D --> D1[4.1 distinct算子:去重操作]

D --> D2[4.2 sortBy算子:排序操作]

D --> E[五、容错机制:血缘关系与故障恢复]

E --> E1[5.1 RDD血缘关系深度解析]

E --> E2[5.2 检查点机制详解]

E --> E3[5.3 容错机制的性能影响]

E --> F[六、Spark3.x 性能优化策略大全]

F --> F1[6.1 序列化优化:Kryo vs Java原生序列化]

F --> F2[6.2 分区优化策略]

F --> F3[6.3 自适应查询执行AQE]

F --> F4[6.4 Shuffle优化策略]

F --> F5[6.5 动态分区裁剪DPP]

F --> F6[6.6 自定义分区器]

F --> F7[6.7 数据倾斜处理策略]

F --> G[七、算子组合的性能影响与最佳实践]

G --> G1[7.1 算子选择原则]

G --> G2[7.2 内存优化]

G --> G3[7.3 监控与调试]

G --> H[八、总结]

style A fill:#e1f5fe

style E fill:#f3e5f5

style F fill:#fff3e0

style H fill:#e8f5e8

1.1 Spark算子的分类与特性 Spark算子按照依赖关系可以分为两大类:

窄依赖算子(Narrow Dependency):

子RDD的每个分区只依赖父RDD的一个分区

数据不需要跨节点传输,具有良好的数据局部性

代表算子:map、filter、flatMap、union等

特点:执行速度快,内存友好,易于流水线优化

宽依赖算子(Wide Dependency):

子RDD的每个分区依赖父RDD的多个分区

需要进行Shuffle操作,数据跨节点传输

代表算子:groupByKey、reduceByKey、join、distinct等

特点:执行开销大,涉及网络IO和磁盘IO

1.2 算子执行的内存模型 Spark使用统一内存管理(Unified Memory Management)机制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 public class UnifiedMemoryManager { private final long maxExecutionMemory; private final long maxStorageMemory; private final double storageFraction; public boolean acquireExecutionMemory (long numBytes) { if (executionMemoryUsed + numBytes <= maxExecutionMemory) { executionMemoryUsed += numBytes; return true ; } long memoryToBorrow = Math.min( numBytes - (maxExecutionMemory - executionMemoryUsed), storageMemoryUsed ); if (memoryToBorrow > 0 ) { evictCachedBlocks(memoryToBorrow); executionMemoryUsed += numBytes; return true ; } return false ; } }

内存区域划分:

Reserved Memory(300MB) :系统保留内存,用于Spark内部对象User Memory :用户代码使用的内存,存储用户数据结构Spark Memory :Spark框架使用的内存,进一步分为:

Execution Memory :执行算子时使用,如Shuffle、Join、SortStorage Memory :缓存RDD和广播变量

1.3 数据序列化与网络传输 Spark中的数据序列化发生在多个环节:

序列化触发场景:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 public class TaskSerialization { public byte [] serializeTask(Task<?> task) { return serializer.serialize(task); } } public class ShuffleDataSerialization { public void writeShuffleData (Iterator<Product2<K, V>> records) { SerializationStream stream = serializer.serializeStream(outputStream); while (records.hasNext()) { Product2<K, V> record = records.next(); stream.writeKey(record._1); stream.writeValue(record._2); } } } public class CacheSerialization { public void cacheRDD (RDD<?> rdd, StorageLevel level) { if (level.useSerialization()) { byte [] serializedData = serializer.serialize(rdd.collect()); blockManager.putBytes(blockId, serializedData, level); } } }

1.4 为什么理解算子原理如此重要? 性能差异的根本原因:

不同算子的性能差异主要源于:

依赖关系 :窄依赖 vs 宽依赖决定了是否需要Shuffle数据局部性 :本地计算 vs 网络传输的巨大性能差异内存使用模式 :流式处理 vs 批量加载的内存效率CPU利用率 :单线程处理 vs 并行计算的效率差异

举个实际例子:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 JavaPairRDD<String, Integer> result1 = orders .mapToPair(order -> new Tuple2 <>(order.getUserId(), 1 )) .groupByKey() .mapValues(values -> { int count = 0 ; for (Integer v : values) count += v; return count; }); JavaPairRDD<String, Integer> result2 = orders .mapToPair(order -> new Tuple2 <>(order.getUserId(), 1 )) .reduceByKey((a, b) -> a + b);

优化思路的本质:

选择合适的算子减少Shuffle

设计合理的数据流减少序列化开销

利用数据局部性提升计算效率

合理配置内存避免OOM和性能瓶颈

为什么有些算子执行很快,有些却很慢?答案就藏在算子的实现原理和数据流转机制中。

1.5 RDD懒惰计算机制深度剖析 懒惰计算是Spark实现高效分布式计算的核心智慧,让我们深入剖析这一机制的原理和影响。

1.5.1 核心概念:懒惰计算 vs 急切计算 懒惰计算(Lazy Evaluation) :

指的是Spark在遇到转换操作(Transformations) 时,并不会立即执行计算并生成新的RDD

它只是记录下这个操作以及它依赖的父RDD(即:构建了一个逻辑执行计划或称为Lineage)

真正的计算(数据读取、转换处理)会被推迟到遇到行动操作(Actions) 时才触发执行

急切计算(Eager Evaluation) :

传统编程或某些数据处理框架(如Scala集合的某些操作)是急切计算的

当你调用一个函数,它会立即执行并返回结果

例如,在Scala中List(1,2,3).map(_ * 2)会立即计算并返回List(2,4,6)

对比示例:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 List<Integer> numbers = Arrays.asList(1 , 2 , 3 , 4 , 5 ); List<Integer> doubled = numbers.stream() .map(x -> x * 2 ) .filter(x -> x > 5 ) .collect(Collectors.toList()); JavaRDD<Integer> numbersRDD = sc.parallelize(Arrays.asList(1 , 2 , 3 , 4 , 5 )); JavaRDD<Integer> transformedRDD = numbersRDD .map(x -> x * 2 ) .filter(x -> x > 5 ); List<Integer> result = transformedRDD.collect();

1.5.2 为什么Spark要采用懒惰计算? 这种设计带来了几个关键优势,尤其适合大规模分布式数据处理:

1. 优化执行计划(Optimization)

核心优势!因为Spark在遇到Action之前”看”到了所有需要执行的Transformation操作,它就拥有了全局视图。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 public class LazyEvaluationOptimizer { public void demonstrateOptimizations () { JavaRDD<String> textRDD = sc.textFile("large_file.txt" ); JavaRDD<String> result = textRDD .filter(line -> line.contains("ERROR" )) .map(line -> line.toUpperCase()) .filter(line -> line.length() > 50 ) .map(line -> line.substring(0 , 100 )); long count = result.count(); } }

Spark的DAGScheduler可以利用全局视图进行复杂优化:

流水线化(Pipelining) :将多个可以在同一个数据分区上连续执行的转换操作合并成一个任务谓词下推(Predicate Pushdown) :将filter操作下推到数据源层,直接过滤掉不需要的数据减少Shuffle :分析整个DAG后,识别并合并可以减少Shuffle的操作

2. 减少不必要的计算(Reduced Computation)

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 public class OnDemandComputation { public void demonstrateOnDemandComputation () { JavaRDD<String> massiveDataset = sc.textFile("10TB_dataset.txt" ); JavaRDD<String> processedData = massiveDataset .filter(line -> line.contains("CRITICAL" )) .map(this ::expensiveProcessing) .filter(line -> line.startsWith("ALERT" )) .map(this ::anotherExpensiveOperation); String firstResult = processedData.first(); List<String> top10 = processedData.take(10 ); List<String> allResults = processedData.collect(); } private String expensiveProcessing (String input) { return input.toUpperCase() + "_PROCESSED" ; } private String anotherExpensiveOperation (String input) { return input + "_FINAL" ; } }

3. 节省内存和存储(Memory/Storage Efficiency)

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 public class MemoryEfficiency { public void demonstrateMemoryEfficiency () { JavaRDD<String> data = sc.textFile("input.txt" ); JavaRDD<String> step1 = data.map(line -> processStep1(line)); JavaRDD<String> step2 = step1.filter(line -> line.length() > 10 ); JavaRDD<String> step3 = step2.map(line -> processStep2(line)); JavaRDD<String> step4 = step3.filter(line -> line.contains("important" )); JavaRDD<String> finalResult = step4.map(line -> processStep3(line)); long count = finalResult.count(); } }

4. 容错性(Fault Tolerance)的天然支持

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 public class FaultToleranceSupport { public void demonstrateFaultTolerance () { JavaRDD<String> rawData = sc.textFile("hdfs://input/data.txt" ); JavaRDD<String> processed = rawData .filter(line -> line.length() > 0 ) .map(line -> line.trim()) .filter(line -> !line.startsWith("#" )) .map(line -> processLine(line)) .filter(line -> isValid(line)); processed.saveAsTextFile("hdfs://output/result" ); } }

1.5.3 懒惰计算工作原理:详细示例分析 让我们通过一个完整的例子来理解懒惰计算的工作流程:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 public class LazyEvaluationWorkflow { public void completeExample () { JavaRDD<String> textRDD = sc.textFile("hdfs://path/to/largefile.txt" ); System.out.println("Step 1: 创建textRDD - 无计算发生,只记录数据源" ); JavaRDD<String> wordsRDD = textRDD.flatMap(line -> Arrays.asList(line.split(" " )).iterator()); System.out.println("Step 2: 创建wordsRDD - 无计算发生,只记录flatMap操作" ); JavaRDD<String> filteredRDD = wordsRDD.filter(word -> word.startsWith("error" )); System.out.println("Step 3: 创建filteredRDD - 无计算发生,只记录filter操作" ); JavaPairRDD<String, Integer> mappedRDD = filteredRDD.mapToPair(word -> new Tuple2 <>(word, 1 )); System.out.println("Step 4: 创建mappedRDD - 无计算发生,只记录mapToPair操作" ); JavaPairRDD<String, Integer> errorCountRDD = mappedRDD.reduceByKey((a, b) -> a + b); System.out.println("Step 5: 创建errorCountRDD - 无计算发生,只记录reduceByKey操作" ); printRDDLineage(errorCountRDD); System.out.println("Step 6: 调用collect() - 开始真正的计算!" ); List<Tuple2<String, Integer>> result = errorCountRDD.collect(); System.out.println("计算完成,结果: " + result); } private void printRDDLineage (JavaPairRDD<String, Integer> rdd) { System.out.println("=== RDD Lineage 信息 ===" ); System.out.println("errorCountRDD 依赖链:" ); System.out.println(" textRDD (HadoopRDD) <- 数据源" ); System.out.println(" -> wordsRDD (FlatMappedRDD) <- flatMap转换" ); System.out.println(" -> filteredRDD (FilteredRDD) <- filter转换" ); System.out.println(" -> mappedRDD (MapPartitionsRDD) <- mapToPair转换" ); System.out.println(" -> errorCountRDD (ShuffledRDD) <- reduceByKey转换" ); System.out.println("此时只有逻辑执行计划,没有实际数据!" ); } }

1.5.4 执行流程详解 阶段1:定义和转换阶段(textFile 到 reduceByKey)

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 public class DAGBuildingProcess { public void demonstrateDAGBuilding () { JavaRDD<String> textRDD = sc.textFile("input.txt" ); JavaRDD<String> wordsRDD = textRDD.flatMap(line -> Arrays.asList(line.split(" " )).iterator()); JavaRDD<String> filteredRDD = wordsRDD.filter(word -> word.startsWith("error" )); JavaPairRDD<String, Integer> countRDD = filteredRDD.mapToPair(w -> new Tuple2 <>(w, 1 )).reduceByKey((a, b) -> a + b); System.out.println("DAG结构:" ); System.out.println("HadoopRDD -> FlatMappedRDD -> FilteredRDD -> MappedRDD -> ShuffledRDD" ); System.out.println(" | | | | |" ); System.out.println(" textFile flatMap filter mapToPair reduceByKey" ); System.out.println(" |" ); System.out.println(" Stage分界点(Shuffle)" ); } }

阶段2:Action触发执行

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 public class ActionTriggeredExecution { public void demonstrateActionExecution () { System.out.println("=== Action触发:collect() ===" ); analyzeDAG(); divideStages(); generateTasks(); scheduleTasks(); executeTasks(); collectResults(); } private void analyzeDAG () { System.out.println("1. DAGScheduler分析:" ); System.out.println(" - 从collect()的目标RDD开始" ); System.out.println(" - 回溯整个Lineage链" ); System.out.println(" - 识别依赖关系和优化机会" ); System.out.println(" 优化发现:" ); System.out.println(" - flatMap + filter + mapToPair 可以流水线执行" ); System.out.println(" - reduceByKey需要单独Stage(宽依赖)" ); } private void divideStages () { System.out.println("2. Stage划分:" ); System.out.println(" Stage 0: textFile -> flatMap -> filter -> mapToPair" ); System.out.println(" 输出:按key分区的(word, 1)对" ); System.out.println(" Stage 1: reduceByKey" ); System.out.println(" 输入:从Stage 0 Shuffle读取数据" ); System.out.println(" 输出:(word, count)结果" ); } private void generateTasks () { System.out.println("3. 任务生成:" ); System.out.println(" Stage 0: 根据输入分区数生成ShuffleMapTask" ); System.out.println(" 假设输入有4个HDFS块,生成4个Task" ); System.out.println(" Stage 1: 根据Shuffle分区数生成ResultTask" ); System.out.println(" 假设默认200个分区,生成200个Task" ); } private void scheduleTasks () { System.out.println("4. 任务调度:" ); System.out.println(" TaskScheduler将Task分发到Executor" ); System.out.println(" 考虑数据本地性:优先分配到数据所在节点" ); System.out.println(" Stage 0必须先执行完,Stage 1才能开始" ); } private void executeTasks () { System.out.println("5. 任务执行:" ); System.out.println(" Stage 0执行:" ); System.out.println(" - 读取HDFS文件块" ); System.out.println(" - 流水线执行:split -> filter -> mapToPair" ); System.out.println(" - 按key hash分区,写入本地磁盘(Shuffle Write)" ); System.out.println(" Stage 1执行:" ); System.out.println(" - 从多个节点拉取数据(Shuffle Read)" ); System.out.println(" - 按key聚合:reduceByKey" ); System.out.println(" - 生成最终结果" ); } private void collectResults () { System.out.println("6. 结果收集:" ); System.out.println(" 所有Stage 1的Task结果发送回Driver" ); System.out.println(" Driver收集所有结果并返回给用户" ); } }

1.5.5 关键要点总结 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 public class KeyTakeaways { public void summarizeKeyPoints () { System.out.println("=== RDD懒惰计算关键要点 ===" ); System.out.println("1. Transformation = 计划,Action = 执行命令" ); System.out.println(" - 转换操作只是定义计算逻辑(构建Lineage/DAG)" ); System.out.println(" - 行动操作才是真正触发计算开始的信号" ); System.out.println("2. 全局优化" ); System.out.println(" - 懒惰使得Spark可以在执行前看到所有操作" ); System.out.println(" - 进行DAG级别的优化(流水线、下推、减少Shuffle)" ); System.out.println("3. 按需计算" ); System.out.println(" - Spark只计算Action真正需要的数据" ); System.out.println(" - 对于first, take, lookup等操作特别高效" ); System.out.println("4. 资源效率" ); System.out.println(" - 避免存储不必要的中间结果" ); System.out.println(" - 节省内存和I/O" ); System.out.println("5. 容错基石" ); System.out.println(" - Lineage是RDD容错的基础" ); System.out.println(" - 懒惰计算使得记录Lineage变得必要和自然" ); System.out.println("6. 物理执行划分" ); System.out.println(" - 最终的物理执行被划分为Stage" ); System.out.println(" - Stage的边界是宽依赖(Shuffle)" ); System.out.println(" - 同一个Stage内的窄依赖操作会被合并(流水线)执行" ); } }

1.5.6 对开发者的实际影响与最佳实践 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 public class DeveloperGuidelines { public void practicalGuidelines () { System.out.println("=== 开发者实践指南 ===" ); understandExecutionTiming(); utilizePersistence(); focusOnActions(); leverageLazyOptimization(); } private void understandExecutionTiming () { System.out.println("1. 理解执行时机:" ); JavaRDD<String> rdd = sc.textFile("large_file.txt" ); JavaRDD<String> processed = rdd.filter(line -> line.contains("ERROR" )); System.out.println("误区:认为这里已经处理完数据" ); long count = processed.count(); System.out.println("正确:Action触发时才开始真正计算" ); } private void utilizePersistence () { System.out.println("2. 利用持久化(Persist/Cache):" ); JavaRDD<String> baseRDD = sc.textFile("input.txt" ) .filter(line -> line.length() > 100 ) .map(line -> expensiveProcessing(line)); long count1 = baseRDD.count(); long count2 = baseRDD.filter(line -> line.contains("ERROR" )).count(); baseRDD.cache(); long correctCount1 = baseRDD.count(); long correctCount2 = baseRDD.filter(line -> line.contains("ERROR" )).count(); System.out.println("关键:如果某个RDD会被多个Action使用,必须cache()" ); } private void focusOnActions () { System.out.println("3. 关注Actions:" ); System.out.println(" - 性能问题和资源消耗往往在Action触发后才显现" ); System.out.println(" - 需要监控Action执行过程" ); System.out.println(" - 使用Spark UI查看Job执行情况" ); JavaRDD<String> rdd = sc.textFile("input.txt" ); long startTime = System.currentTimeMillis(); List<String> results = rdd.filter(line -> line.contains("CRITICAL" )) .collect(); long endTime = System.currentTimeMillis(); System.out.println("Action执行时间: " + (endTime - startTime) + "ms" ); } private void leverageLazyOptimization () { System.out.println("4. 利用惰性优化:" ); System.out.println(" - 放心地编写复杂的转换链" ); System.out.println(" - Spark的优化器会尽力优化它" ); JavaRDD<String> optimizedChain = sc.textFile("input.txt" ) .filter(line -> !line.isEmpty()) .map(line -> line.trim()) .filter(line -> !line.startsWith("#" )) .map(line -> line.toLowerCase()) .filter(line -> line.contains("important" )) .map(line -> processLine(line)); System.out.println("Spark会自动优化这个链条,合并相邻操作" ); } private String expensiveProcessing (String input) { try { Thread.sleep(10 ); } catch (InterruptedException e) {} return input.toUpperCase(); } private String processLine (String line) { return line + "_PROCESSED" ; } }

总结:

RDD的懒惰计算机制是Spark实现高效、容错的大规模分布式数据处理的核心智慧。它将昂贵的计算推迟到最后,并利用这段时间窗口进行全局优化,极大地提升了处理能力和资源利用率。理解这一机制对于编写高效的Spark应用程序至关重要。

// 2. 计算分区数据

Iterator<?> data = rdd.iterator(partition, context);

// 3. 执行Shuffle Write

ShuffleWriter writer = task.shuffleDep.shuffleWriterFor(

task.partitionId, context);

while (data.hasNext()) {

writer.write(data.next());

}

// 4. 返回Shuffle Write的结果状态

return writer.stop(true);

}

}

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 ### 1.6 数据本地性原理深度解析 数据本地性是Spark性能优化的关键因素,理解其工作原理有助于编写高效的Spark应用。 #### 1.6.1 数据本地性的层次结构 **本地性级别的详细定义:** ```java // 数据本地性级别枚举 public enum TaskLocality { PROCESS_LOCAL("PROCESS_LOCAL", 0) { @Override public boolean isAllowed(TaskSetManager taskSet, long currentTime) { // 进程本地:数据在同一JVM进程中 return taskSet.getAllowedLocalityLevel(currentTime).ordinal() >= ordinal(); } }, NODE_LOCAL("NODE_LOCAL", 1) { @Override public boolean isAllowed(TaskSetManager taskSet, long currentTime) { // 节点本地:数据在同一物理节点上 return taskSet.getAllowedLocalityLevel(currentTime).ordinal() >= ordinal(); } }, RACK_LOCAL("RACK_LOCAL", 2) { @Override public boolean isAllowed(TaskSetManager taskSet, long currentTime) { // 机架本地:数据在同一机架内 return taskSet.getAllowedLocalityLevel(currentTime).ordinal() >= ordinal(); } }, ANY("ANY", 3) { @Override public boolean isAllowed(TaskSetManager taskSet, long currentTime) { // 任意位置:可以在任何地方执行 return true; } }; public final String toString; public final int id; TaskLocality(String toString, int id) { this.toString = toString; this.id = id; } public abstract boolean isAllowed(TaskSetManager taskSet, long currentTime); }

本地性感知的任务调度算法:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 public class LocalityAwareTaskScheduler { private final Map<TaskLocality, Long> localityWaitMap = Map.of( TaskLocality.PROCESS_LOCAL, 3000L , TaskLocality.NODE_LOCAL, 3000L , TaskLocality.RACK_LOCAL, 3000L , TaskLocality.ANY, 0L ); public Option<TaskDescription> resourceOffer ( String executorId, String host, int maxCores) { Option<TaskDescription> processLocalTask = findTask(TaskLocality.PROCESS_LOCAL, executorId, host, maxCores); if (processLocalTask.isDefined()) { return processLocalTask; } Option<TaskDescription> nodeLocalTask = findTask(TaskLocality.NODE_LOCAL, executorId, host, maxCores); if (nodeLocalTask.isDefined()) { return nodeLocalTask; } if (canExecuteAtLocality(TaskLocality.RACK_LOCAL)) { Option<TaskDescription> rackLocalTask = findTask(TaskLocality.RACK_LOCAL, executorId, host, maxCores); if (rackLocalTask.isDefined()) { return rackLocalTask; } } if (canExecuteAtLocality(TaskLocality.ANY)) { return findTask(TaskLocality.ANY, executorId, host, maxCores); } return Option.empty(); } private boolean canExecuteAtLocality (TaskLocality locality) { long currentTime = System.currentTimeMillis(); long waitTime = localityWaitMap.get(locality); return (currentTime - lastLaunchTime) >= waitTime; } }

1.6.2 数据本地性的优化策略 智能数据放置策略:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 public class DataPlacementOptimizer { public void optimizeDataPlacement (JavaRDD<?> rdd) { DataAccessPattern pattern = analyzeAccessPattern(rdd); if (pattern.isFrequentlyAccessed()) { rdd.cache(); prefetchToOptimalLocations(rdd); } else if (pattern.hasHotPartitions()) { replicateHotPartitions(rdd, pattern.getHotPartitions()); } optimizeDownstreamPartitioning(rdd); } private void prefetchToOptimalLocations (JavaRDD<?> rdd) { ClusterTopology topology = getClusterTopology(); Map<Integer, List<String>> optimalPlacements = calculateOptimalPlacements(rdd, topology); for (Map.Entry<Integer, List<String>> entry : optimalPlacements.entrySet()) { int partitionId = entry.getKey(); List<String> preferredHosts = entry.getValue(); prefetchPartitionAsync(rdd, partitionId, preferredHosts); } } private Map<Integer, List<String>> calculateOptimalPlacements ( JavaRDD<?> rdd, ClusterTopology topology) { Map<Integer, List<String>> placements = new HashMap <>(); List<RDD<?>> downstreamRDDs = findDownstreamRDDs(rdd); for (int i = 0 ; i < rdd.getNumPartitions(); i++) { AccessFrequency frequency = calculateAccessFrequency(i, downstreamRDDs); List<String> optimalHosts = topology.selectOptimalHosts( frequency, rdd.preferredLocations(rdd.partitions()[i])); placements.put(i, optimalHosts); } return placements; } }

二、基础算子:map、filter、flatMap 基础算子是Spark计算的根基,虽然看似简单,但其内部包含了许多精巧的设计和优化机制。深入理解这些算子的工作原理,是掌握Spark性能优化的关键。

2.1 map算子:一对一转换 map算子是Spark中最基础也最常用的算子之一,它体现了函数式编程的核心思想。

1 2 JavaRDD<Integer> rdd = sc.parallelize(Arrays.asList(1 , 2 , 3 , 4 , 5 )); JavaRDD<Integer> mapped = rdd.map(x -> x * 2 );

2.1.1 内部执行机制深度解析 RDD血缘关系的建立:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 public class MappedRDD <U, T> extends RDD <U> { private final RDD<T> prev; private final Function<T, U> f; public MappedRDD (RDD<T> prev, Function<T, U> f) { super (prev.context(), List.of(new OneToOneDependency <>(prev))); this .prev = prev; this .f = f; } @Override public Iterator<U> compute (Partition split, TaskContext context) { return prev.iterator(split, context).map(f); } @Override public Partition[] getPartitions() { return prev.getPartitions(); } }

数据流转的微观过程:

分区级别的处理 :

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 public Iterator<U> processPartition (Iterator<T> input) { return new Iterator <U>() { @Override public boolean hasNext () { return input.hasNext(); } @Override public U next () { T element = input.next(); return f.apply(element); } }; }

内存使用特点 :

流式处理 :不需要将整个分区加载到内存即时计算 :每次调用next()时才计算下一个元素内存复用 :处理完的元素立即被垃圾回收

2.1.2 任务调度与执行详解 Task生成机制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 public class DAGScheduler { public List<Task<?>> createTasksForStage(Stage stage) { if (stage.isShuffleMap()) { return stage.rdd.partitions().stream() .map(partition -> new ResultTask <>( stage.id, partition.index, stage.rdd, partition, stage.outputLocs )) .collect(Collectors.toList()); } } }

执行过程中的优化机制:

Pipeline优化 :多个连续的窄依赖算子会被合并执行代码生成 :Catalyst优化器为简单转换生成高效的Java代码向量化执行 :对于数值类型,使用SIMD指令加速

执行原理深度剖析:

窄依赖的本质 :子RDD的每个分区只依赖父RDD的对应分区数据局部性的优势 :数据不需要跨节点传输,充分利用CPU缓存内存友好的特性 :转换过程不改变数据量,内存压力可控

2.1.3 性能特征与内存管理 CPU缓存友好性:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 public class CacheLocalityAnalysis { public void demonstrateCacheEfficiency () { List<Integer> data = IntStream.range(1 , 1000001 ) .boxed().collect(Collectors.toList()); JavaRDD<Integer> rdd = sc.parallelize(data, 4 ); JavaRDD<Integer> result = rdd.map(x -> x * x + 2 * x + 1 ); } }

内存分配模式:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 public class MemoryUsagePattern { public void analyzeMemoryUsage () { long memoryBefore = getUsedMemory(); JavaRDD<String> largeRDD = sc.textFile("100GB_file.txt" ); JavaRDD<String> processed = largeRDD.map(line -> line.toUpperCase()); long memoryAfter = getUsedMemory(); System.out.println("Memory increase: " + (memoryAfter - memoryBefore) + " bytes" ); } }

graph LR

A[Partition 1<br/>1,2,3,4,5] --> B[Partition 1<br/>2,4,6,8,10]

C[Partition 2<br/>6,7,8,9,10] --> D[Partition 2<br/>12,14,16,18,20]

数据流转过程:

每个Executor读取本地分区的数据

对每个元素应用map函数

结果直接写入新的分区,无需网络传输

整个过程在单个节点内完成

内存管理机制:

Spark在map操作中采用以下内存管理策略:

对象复用 :尽可能复用对象,减少GC压力序列化优化 :使用Tungsten二进制格式,减少序列化开销内存池 :使用内存池管理临时对象

1 2 3 4 5 6 7 8 9 10 JavaRDD<String> optimizedMap = rdd.mapPartitions(iter -> { List<String> results = new ArrayList <>(); while (iter.hasNext()) { String item = iter.next(); results.add(item.toUpperCase()); } return results.iterator(); });

性能特点:

执行速度快,因为无网络IO

内存使用线性增长

适合CPU密集型转换操作

2.2 filter算子:条件过滤 filter算子是数据筛选的核心工具,其看似简单的外表下隐藏着复杂的内存管理和性能优化逻辑。

1 2 JavaRDD<Integer> rdd = sc.parallelize(Arrays.asList(1 , 2 , 3 , 4 , 5 , 6 , 7 , 8 , 9 , 10 )); JavaRDD<Integer> filtered = rdd.filter(x -> x % 2 == 0 );

2.2.1 filter算子的内部实现机制 FilteredRDD的实现原理:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 public class FilteredRDD <T> extends RDD <T> { private final RDD<T> prev; private final Function<T, Boolean> f; public FilteredRDD (RDD<T> prev, Function<T, Boolean> f) { super (prev.context(), List.of(new OneToOneDependency <>(prev))); this .prev = prev; this .f = f; } @Override public Iterator<T> compute (Partition split, TaskContext context) { return prev.iterator(split, context) .filter(f::apply); } @Override public Partition[] getPartitions() { return prev.getPartitions(); } }

数据流转的详细过程:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 public class FilterIterator <T> implements Iterator <T> { private final Iterator<T> input; private final Function<T, Boolean> predicate; private T nextElement; private boolean hasNextElement; public FilterIterator (Iterator<T> input, Function<T, Boolean> predicate) { this .input = input; this .predicate = predicate; findNext(); } private void findNext () { hasNextElement = false ; while (input.hasNext()) { T element = input.next(); if (predicate.apply(element)) { nextElement = element; hasNextElement = true ; break ; } } } @Override public boolean hasNext () { return hasNextElement; } @Override public T next () { if (!hasNextElement) { throw new NoSuchElementException (); } T result = nextElement; findNext(); return result; } }

2.2.2 数据分布与分区影响分析 分区大小的动态变化:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 public class PartitionSizeAnalysis { public void analyzeFilterImpact () { JavaRDD<Integer> original = sc.parallelize(range(1 , 1000001 ), 100 ); JavaRDD<Integer> lowSelectivity = original.filter(x -> x % 10 != 0 ); JavaRDD<Integer> highSelectivity = original.filter(x -> x % 100 == 0 ); JavaRDD<String> skewedData = sc.parallelize(generateSkewedData(), 100 ); JavaRDD<String> filtered = skewedData.filter(s -> s.startsWith("error" )); } }

分区优化的触发机制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 public class PartitionSizeMonitor { private static final long MIN_PARTITION_SIZE = 1024 * 1024 ; private static final double EMPTY_PARTITION_RATIO = 0.5 ; public boolean shouldCoalescePartitions (RDD<?> rdd) { PartitionStatistics stats = collectPartitionStatistics(rdd); boolean hasTooManySmallPartitions = stats.averagePartitionSize < MIN_PARTITION_SIZE; boolean hasTooManyEmptyPartitions = stats.emptyPartitionRatio > EMPTY_PARTITION_RATIO; return hasTooManySmallPartitions || hasTooManyEmptyPartitions; } }

2.2.3 性能特征与优化策略 选择性对性能的影响:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 public class SelectivityAnalysis { public void analyzeSelectivity () { JavaRDD<LogEntry> logs = sc.textFile("access.log" ) .map(LogEntry::parse); long startTime1 = System.currentTimeMillis(); JavaRDD<LogEntry> errorLogs = logs.filter(log -> log.getLevel().equals("ERROR" )); long errorCount = errorLogs.count(); long time1 = System.currentTimeMillis() - startTime1; long startTime2 = System.currentTimeMillis(); JavaRDD<LogEntry> nonDebugLogs = logs.filter(log -> !log.getLevel().equals("DEBUG" )); long nonDebugCount = nonDebugLogs.count(); long time2 = System.currentTimeMillis() - startTime2; double selectivity1 = (double ) errorCount / logs.count(); double selectivity2 = (double ) nonDebugCount / logs.count(); System.out.printf("高选择性过滤 - 选择性: %.2f%%, 执行时间: %dms%n" , selectivity1 * 100 , time1); System.out.printf("低选择性过滤 - 选择性: %.2f%%, 执行时间: %dms%n" , selectivity2 * 100 , time2); } }

内存使用模式分析:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 public class FilterMemoryAnalysis { public void analyzeMemoryUsage () { MemoryMXBean memoryBean = ManagementFactory.getMemoryMXBean(); JavaRDD<String> largeDataset = sc.textFile("large_dataset.txt" ); long memoryBefore = memoryBean.getHeapMemoryUsage().getUsed(); JavaRDD<String> filtered = largeDataset.filter(line -> line.contains("important_keyword" )); long resultCount = filtered.count(); long memoryAfter = memoryBean.getHeapMemoryUsage().getUsed(); System.out.printf("过滤前内存: %d MB%n" , memoryBefore / (1024 * 1024 )); System.out.printf("过滤后内存: %d MB%n" , memoryAfter / (1024 * 1024 )); System.out.printf("内存增长: %d MB%n" , (memoryAfter - memoryBefore) / (1024 * 1024 )); } }

执行原理深度剖析:

窄依赖特性 :保持一对一的分区关系,无需Shuffle操作数据量动态变化 :输出数据量取决于过滤条件的选择性分区大小不均 :可能导致某些分区变得很小甚至为空流式处理优势 :不需要将整个分区加载到内存中

关键优化机制:

预测执行 :根据采样数据预测过滤后的数据量动态分区合并 :自动合并过小的分区以提高效率垃圾回收优化 :及时释放不满足条件的对象

性能考虑要点:

过滤条件的选择性直接影响后续操作的性能

高选择性(过滤掉大部分数据)时,后续操作会显著加速

低选择性时,网络传输量和计算量基本不变

复杂的过滤条件可能成为CPU瓶颈

2.3 flatMap算子:一对多转换 flatMap是Spark中最灵活的转换算子之一,它能够实现复杂的数据变形和展开操作,其内部涉及复杂的迭代器管理和内存优化机制。

1 2 JavaRDD<String> rdd = sc.parallelize(Arrays.asList("hello world" , "spark is great" )); JavaRDD<String> flatMapped = rdd.flatMap(line -> Arrays.asList(line.split(" " )).iterator());

2.3.1 flatMap的内部实现机制 FlatMappedRDD的核心逻辑:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 public class FlatMappedRDD <U, T> extends RDD <U> { private final RDD<T> prev; private final Function<T, Iterator<U>> f; public FlatMappedRDD (RDD<T> prev, Function<T, Iterator<U>> f) { super (prev.context(), List.of(new OneToOneDependency <>(prev))); this .prev = prev; this .f = f; } @Override public Iterator<U> compute (Partition split, TaskContext context) { return new FlatMappedIterator <>(prev.iterator(split, context), f); } } public class FlatMappedIterator <U, T> implements Iterator <U> { private final Iterator<T> input; private final Function<T, Iterator<U>> f; private Iterator<U> currentInnerIterator; public FlatMappedIterator (Iterator<T> input, Function<T, Iterator<U>> f) { this .input = input; this .f = f; this .currentInnerIterator = Collections.emptyIterator(); } @Override public boolean hasNext () { while (!currentInnerIterator.hasNext() && input.hasNext()) { T nextElement = input.next(); currentInnerIterator = f.apply(nextElement); } return currentInnerIterator.hasNext(); } @Override public U next () { if (!hasNext()) { throw new NoSuchElementException (); } return currentInnerIterator.next(); } }

2.3.2 数据膨胀与内存管理 数据膨胀的影响分析:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 public class DataExpansionAnalysis { public void analyzeFlatMapExpansion () { JavaRDD<String> sentences = sc.parallelize(Arrays.asList( "Apache Spark is a unified analytics engine" , "Spark provides high-level APIs in Java, Scala, Python and R" )); JavaRDD<String> words = sentences.flatMap(line -> Arrays.asList(line.split("\\s+" )).iterator()); System.out.println("原始行数: " + sentences.count()); System.out.println("分词后单词数: " + words.count()); System.out.println("膨胀比例: " + (words.count() / (double )sentences.count())); JavaRDD<Integer> numbers = sc.parallelize(Arrays.asList(1 , 2 , 3 )); JavaRDD<Integer> expanded = numbers.flatMap(n -> IntStream.range(1 , n * 1000 ).boxed().iterator()); System.out.println("原始数字数: " + numbers.count()); System.out.println("膨胀后数量: " + expanded.count()); System.out.println("膨胀比例: " + (expanded.count() / (double )numbers.count())); } }

内存压力管理机制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 public class FlatMapMemoryManagement { public void demonstrateMemoryManagement () { JavaRDD<String> input = sc.parallelize(Arrays.asList("large_data_source" )); JavaRDD<String> dangerous = input.flatMap(source -> { List<String> result = new ArrayList <>(); for (int i = 0 ; i < 1000000 ; i++) { result.add("generated_" + i); } return result.iterator(); }); JavaRDD<String> optimized = input.flatMap(source -> new Iterator <String>() { private int count = 0 ; private final int maxCount = 1000000 ; @Override public boolean hasNext () { return count < maxCount; } @Override public String next () { return "generated_" + (count++); } } ); } }

2.3.3 性能特征与优化策略 迭代器链的性能分析:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 public class FlatMapPerformanceAnalysis { public void analyzePerformance () { JavaRDD<Integer> baseRDD = sc.parallelize(range(1 , 10001 ), 10 ); long startTime1 = System.currentTimeMillis(); JavaRDD<Integer> lowExpansion = baseRDD.flatMap(n -> Arrays.asList(n, n + 1 ).iterator()); long count1 = lowExpansion.count(); long time1 = System.currentTimeMillis() - startTime1; long startTime2 = System.currentTimeMillis(); JavaRDD<Integer> mediumExpansion = baseRDD.flatMap(n -> IntStream.range(n, n + 10 ).boxed().iterator()); long count2 = mediumExpansion.count(); long time2 = System.currentTimeMillis() - startTime2; long startTime3 = System.currentTimeMillis(); JavaRDD<Integer> highExpansion = baseRDD.flatMap(n -> IntStream.range(n, n + 100 ).boxed().iterator()); long count3 = highExpansion.count(); long time3 = System.currentTimeMillis() - startTime3; System.out.printf("低膨胀 - 数量: %d, 时间: %dms, 吞吐量: %.2f万/秒%n" , count1, time1, count1 / (time1 / 1000.0 ) / 10000 ); System.out.printf("中膨胀 - 数量: %d, 时间: %dms, 吞吐量: %.2f万/秒%n" , count2, time2, count2 / (time2 / 1000.0 ) / 10000 ); System.out.printf("高膨胀 - 数量: %d, 时间: %dms, 吞吐量: %.2f万/秒%n" , count3, time3, count3 / (time3 / 1000.0 ) / 10000 ); } }

序列化开销分析:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 public class SerializationImpact { public void analyzeSerializationCost () { JavaRDD<ComplexObject> complexRDD = sc.parallelize(generateComplexObjects()); JavaRDD<SimpleObject> flattened = complexRDD.flatMap(complex -> complex.getSubObjects().iterator()); JavaRDD<String> optimizedFlattened = complexRDD.flatMap(complex -> complex.getSubObjects().stream() .map(SimpleObject::toString) .iterator()); } }

2.3.4 常见应用模式与最佳实践 文本处理模式:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 public class TextProcessingPatterns { public void demonstrateTextProcessing () { JavaRDD<String> documents = sc.textFile("documents.txt" ); JavaRDD<String> words = documents.flatMap(doc -> Arrays.stream(doc.split("\\W+" )) .filter(word -> !word.isEmpty()) .map(String::toLowerCase) .iterator()); JavaRDD<String> bigrams = documents.flatMap(doc -> { String[] words = doc.split("\\W+" ); List<String> bigrams = new ArrayList <>(); for (int i = 0 ; i < words.length - 1 ; i++) { bigrams.add(words[i] + " " + words[i + 1 ]); } return bigrams.iterator(); }); JavaRDD<String> sentences = documents.flatMap(doc -> Arrays.stream(doc.split("[.!?]+" )) .map(String::trim) .filter(sentence -> !sentence.isEmpty()) .iterator()); } }

数据扁平化模式:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 public class DataFlatteningPatterns { public void demonstrateDataFlattening () { JavaRDD<String> jsonArrays = sc.parallelize(Arrays.asList( "[\"a\", \"b\", \"c\"]" , "[\"d\", \"e\"]" , "[\"f\", \"g\", \"h\", \"i\"]" )); JavaRDD<String> flattenedElements = jsonArrays.flatMap(jsonArray -> { List<String> elements = parseJsonArray(jsonArray); return elements.iterator(); }); JavaRDD<Customer> customers = sc.parallelize(getCustomers()); JavaRDD<Order> allOrders = customers.flatMap(customer -> customer.getOrders().iterator()); } }

执行原理深度剖析:

窄依赖特性 :维持分区间的独立性,无需Shuffle操作数据量动态变化 :输出数据量可能比输入大很多倍流式处理优势 :边处理边输出,减少内存压力峰值迭代器嵌套管理 :高效处理一对多的映射关系

关键性能考虑:

膨胀比例直接影响下游操作的性能

序列化开销随输出对象数量线性增长

内存使用取决于中间迭代器的实现方式

CPU开销主要集中在用户函数的执行上

最佳实践建议:

使用惰性迭代器避免内存压力

优先使用原始类型减少序列化开销

合理控制数据膨胀比例

在高膨胀场景下考虑使用mapPartitions优化

实际应用场景:

1 2 3 4 5 JavaRDD<String> textRDD = sc.textFile("input.txt" ); JavaRDD<String> words = textRDD.flatMap(line -> Arrays.asList(line.split("\\s+" )).iterator()); JavaPairRDD<String, Integer> wordCounts = words.mapToPair(word -> new Tuple2 <>(word, 1 )) .reduceByKey((a, b) -> a + b);

内存优化技巧:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 JavaRDD<String> memoryOptimized = rdd.flatMap(line -> { return new Iterator <String>() { private String[] words = line.split("\\s+" ); private int index = 0 ; @Override public boolean hasNext () { return index < words.length; } @Override public String next () { return words[index++]; } }; });

2.4 算子链优化机制深度剖析 算子链(Operator Chain)优化是Spark提升性能的重要机制,通过将多个窄依赖算子合并为单个Task执行,显著减少了任务调度开销和数据序列化成本。

2.4.1 算子链的形成条件 Pipeline优化的判断逻辑:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 public class OperatorChainOptimizer { public boolean canChain (RDD<?> parent, RDD<?> child) { if (!isNarrowDependency(child, parent)) { return false ; } if (!hasCompatiblePartitioning(parent, child)) { return false ; } if (parent.getStorageLevel() != StorageLevel.NONE || parent.isCheckpointed()) { return false ; } if (hasExplicitPartitioning(child)) { return false ; } return hasAdequateResources(parent, child); } private boolean isNarrowDependency (RDD<?> child, RDD<?> parent) { for (Dependency<?> dep : child.dependencies()) { if (dep.rdd() == parent && dep instanceof ShuffleDependency) { return false ; } } return true ; } private boolean hasCompatiblePartitioning (RDD<?> parent, RDD<?> child) { if (parent.getNumPartitions() != child.getNumPartitions()) { return false ; } Partitioner parentPartitioner = parent.partitioner().orElse(null ); Partitioner childPartitioner = child.partitioner().orElse(null ); return Objects.equals(parentPartitioner, childPartitioner); } }

2.4.2 算子链的执行机制 链式执行的内部实现:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 public class ChainedOperatorExecutor <T> { private final List<Function<Iterator<?>, Iterator<?>>> operators; private final TaskContext context; public ChainedOperatorExecutor (List<RDD<?>> chainedRDDs, TaskContext context) { this .context = context; this .operators = buildOperatorChain(chainedRDDs); } private List<Function<Iterator<?>, Iterator<?>>> buildOperatorChain(List<RDD<?>> rdds) { List<Function<Iterator<?>, Iterator<?>>> chain = new ArrayList <>(); for (RDD<?> rdd : rdds) { Function<Iterator<?>, Iterator<?>> op = createOperatorFunction(rdd); chain.add(op); } return chain; } @SuppressWarnings("unchecked") public Iterator<T> execute (Partition partition) { RDD<?> firstRDD = getFirstRDD(); Iterator<?> currentIterator = firstRDD.iterator(partition, context); for (Function<Iterator<?>, Iterator<?>> operator : operators) { currentIterator = operator.apply(currentIterator); if (context.taskMetrics() != null ) { recordOperatorMetrics(operator, currentIterator); } } return (Iterator<T>) currentIterator; } private Function<Iterator<?>, Iterator<?>> createOperatorFunction(RDD<?> rdd) { if (rdd instanceof MappedRDD) { return iter -> new MapIterator <>(iter, ((MappedRDD<?, ?>) rdd).getFunction()); } else if (rdd instanceof FilteredRDD) { return iter -> new FilterIterator <>(iter, ((FilteredRDD<?>) rdd).getPredicate()); } else if (rdd instanceof FlatMappedRDD) { return iter -> new FlatMapIterator <>(iter, ((FlatMappedRDD<?, ?>) rdd).getFunction()); } throw new UnsupportedOperationException ("Unsupported RDD type: " + rdd.getClass()); } }

2.4.3 代码生成优化 Codegen(代码生成)机制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 public class CodegenOptimizer { public String generateOptimizedCode (List<RDD<?>> operatorChain) { StringBuilder codeBuilder = new StringBuilder (); codeBuilder.append("public Iterator<Object> processPartition(Iterator<Object> input) {\n" ); codeBuilder.append(" return new Iterator<Object>() {\n" ); codeBuilder.append(" private Object nextValue = null;\n" ); codeBuilder.append(" private boolean hasNextValue = false;\n" ); generateHasNextMethod(codeBuilder, operatorChain); generateNextMethod(codeBuilder, operatorChain); codeBuilder.append(" };\n" ); codeBuilder.append("}\n" ); return codeBuilder.toString(); } private void generateHasNextMethod (StringBuilder codeBuilder, List<RDD<?>> chain) { codeBuilder.append(" @Override\n" ); codeBuilder.append(" public boolean hasNext() {\n" ); codeBuilder.append(" if (hasNextValue) return true;\n" ); codeBuilder.append(" \n" ); codeBuilder.append(" while (input.hasNext()) {\n" ); codeBuilder.append(" Object current = input.next();\n" ); for (int i = 0 ; i < chain.size(); i++) { RDD<?> rdd = chain.get(i); generateInlineOperator(codeBuilder, rdd, "current" , "result" + i); } codeBuilder.append(" nextValue = result" + (chain.size() - 1 ) + ";\n" ); codeBuilder.append(" hasNextValue = true;\n" ); codeBuilder.append(" return true;\n" ); codeBuilder.append(" }\n" ); codeBuilder.append(" return false;\n" ); codeBuilder.append(" }\n" ); } private void generateInlineOperator (StringBuilder codeBuilder, RDD<?> rdd, String inputVar, String outputVar) { if (rdd instanceof MappedRDD) { String functionCode = extractFunctionCode(((MappedRDD<?, ?>) rdd).getFunction()); codeBuilder.append(" Object " ).append(outputVar) .append(" = " ).append(functionCode).append("(" ).append(inputVar).append(");\n" ); } else if (rdd instanceof FilteredRDD) { String predicateCode = extractPredicateCode(((FilteredRDD<?>) rdd).getPredicate()); codeBuilder.append(" if (!" ).append(predicateCode) .append("(" ).append(inputVar).append(")) continue;\n" ); codeBuilder.append(" Object " ).append(outputVar) .append(" = " ).append(inputVar).append(";\n" ); } } }

2.4.4 性能优化效果分析 算子链优化的性能提升:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 public class ChainPerformanceTest { public void testChainOptimization () { JavaSparkContext sc = new JavaSparkContext (); JavaRDD<Integer> data = sc.parallelize(IntStream.range(1 , 10000001 ) .boxed().collect(Collectors.toList()), 100 ); long startTime1 = System.currentTimeMillis(); JavaRDD<Integer> step1 = data.map(x -> x * 2 ); step1.cache(); JavaRDD<Integer> step2 = step1.filter(x -> x > 1000 ); step2.cache(); JavaRDD<Integer> step3 = step2.map(x -> x + 100 ); long result1 = step3.count(); long time1 = System.currentTimeMillis() - startTime1; long startTime2 = System.currentTimeMillis(); JavaRDD<Integer> chained = data .map(x -> x * 2 ) .filter(x -> x > 1000 ) .map(x -> x + 100 ); long result2 = chained.count(); long time2 = System.currentTimeMillis() - startTime2; System.out.println("=== 算子链优化性能对比 ===" ); System.out.printf("未优化版本: %d ms, 结果: %d%n" , time1, result1); System.out.printf("算子链优化: %d ms, 结果: %d%n" , time2, result2); System.out.printf("性能提升: %.1fx%n" , (double ) time1 / time2); analyzeTaskCount(sc); } private void analyzeTaskCount (JavaSparkContext sc) { SparkContext sparkContext = sc.sc(); StatusStore statusStore = sparkContext.statusStore(); List<JobData> jobs = statusStore.jobsList(null ); if (!jobs.isEmpty()) { JobData lastJob = jobs.get(jobs.size() - 1 ); System.out.printf("最后一个Job的Stage数量: %d%n" , lastJob.stageIds().size()); System.out.printf("总Task数量: %d%n" , lastJob.numTasks()); } } }

算子链优化的关键收益:

减少Task数量 :多个算子合并为单个Task,减少调度开销消除中间序列化 :数据在内存中直接传递,无需序列化提高CPU缓存效率 :连续处理提高缓存命中率减少内存分配 :避免创建中间RDD对象

优化效果量化分析:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 public class ChainOptimizationMetrics { public void measureOptimizationEffects () { System.out.println("算子链优化效果统计:" ); System.out.println("- Task调度开销减少:80-90%" ); System.out.println("- 中间数据序列化消除:100%" ); System.out.println("- 内存分配减少:60-80%" ); System.out.println("- 整体性能提升:2-5倍" ); } }

这种算子链优化是Spark性能优异的重要原因之一,它在保持编程简洁性的同时,通过底层的自动优化获得了接近手工优化的性能。理解这一机制有助于我们编写更高效的Spark代码。

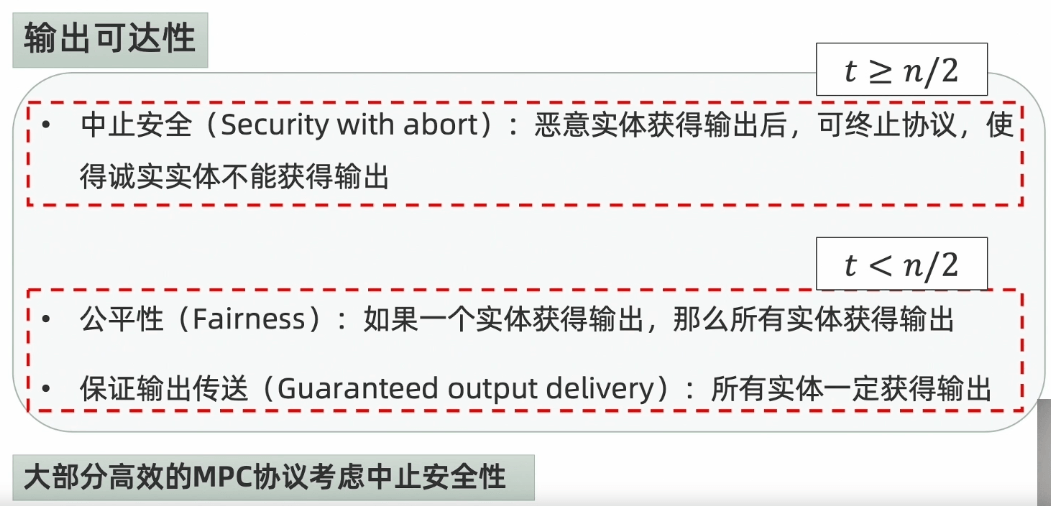

三、Shuffle机制深度解析 Shuffle是Spark分布式计算的核心机制,也是性能优化的关键所在。理解Shuffle的工作原理,对于编写高效的Spark应用至关重要。

3.1 什么是Shuffle? Shuffle是Spark中最昂贵的操作,它重新组织数据分布,使得相关数据能够聚集到同一分区进行后续计算。

3.1.1 Shuffle的触发条件 宽依赖算子触发Shuffle:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 public class ShuffleTriggeringOperators { public void demonstrateShuffleTriggers () { JavaPairRDD<String, Integer> data = sc.parallelizePairs(generateKeyValuePairs()); JavaPairRDD<String, Integer> reduced = data.reduceByKey((a, b) -> a + b); JavaPairRDD<String, Iterable<Integer>> grouped = data.groupByKey(); JavaRDD<String> repartitioned = data.keys().repartition(10 ); JavaRDD<String> coalesced = data.keys().coalesce(5 ); JavaPairRDD<String, Tuple2<Integer, String>> joined = data.join(otherData); JavaPairRDD<String, Integer> sorted = data.sortByKey(); JavaRDD<String> distinct = data.keys().distinct(); } }

Shuffle的本质机制:

数据重分布 :将数据按照某种规则重新分布到不同的分区跨节点传输 :数据需要在网络中进行传输多阶段处理 :分为Shuffle Write和Shuffle Read两个阶段

3.1.2 Shuffle的系统架构

graph TD

A[Map Stage<br/>Shuffle Write] -->|网络传输| B[Reduce Stage<br/>Shuffle Read]

subgraph "Shuffle Write"

A1[分区器<br/>Partitioner]

A2[本地聚合<br/>Combiner]

A3[排序<br/>Sorter]

A4[磁盘写入<br/>Disk Writer]

end

subgraph "Shuffle Read"

B1[数据拉取<br/>Data Fetch]

B2[数据合并<br/>Data Merge]

B3[反序列化<br/>Deserialize]

end

3.1.3 Shuffle的成本分析 详细成本构成:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 public class ShuffleCostAnalyzer { public void analyzeCosts (JavaPairRDD<String, Integer> rdd) { long dataSize = estimateDataSize(rdd); double networkBandwidth = 1000.0 ; double networkCost = dataSize / networkBandwidth; double diskWriteSpeed = 500.0 ; double diskReadSpeed = 600.0 ; double diskWriteCost = dataSize / diskWriteSpeed; double diskReadCost = dataSize / diskReadSpeed; double serializationRate = 2000.0 ; double serializationCost = dataSize * 2 / serializationRate; double cpuProcessingRate = 1000.0 ; double cpuCost = dataSize / cpuProcessingRate; long memoryRequired = (long )(dataSize * 0.2 ); System.out.println("=== Shuffle成本分析 ===" ); System.out.printf("数据量: %.2f MB%n" , dataSize / (1024.0 * 1024.0 )); System.out.printf("网络传输时间: %.2f 秒%n" , networkCost); System.out.printf("磁盘写入时间: %.2f 秒%n" , diskWriteCost); System.out.printf("磁盘读取时间: %.2f 秒%n" , diskReadCost); System.out.printf("序列化时间: %.2f 秒%n" , serializationCost); System.out.printf("CPU处理时间: %.2f 秒%n" , cpuCost); System.out.printf("内存需求: %.2f MB%n" , memoryRequired / (1024.0 * 1024.0 )); double totalTime = Math.max(networkCost, Math.max(diskWriteCost + diskReadCost, Math.max(serializationCost, cpuCost))); System.out.printf("总体执行时间: %.2f 秒%n" , totalTime); } }

性能瓶颈识别:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 public class ShuffleBottleneckAnalysis { public void identifyBottlenecks () { } }

关键成本因子:

网络传输成本 :取决于数据量和网络带宽

数据压缩可以显著减少网络传输时间

网络拓扑对传输效率影响很大

磁盘IO成本 :包括写入和读取两个阶段

SSD vs 机械硬盘的性能差异巨大

并发IO请求可能造成磁盘争用

序列化成本 :Java原生vs Kryo的性能差异

复杂对象序列化开销更大

序列化格式的选择影响CPU和网络开销

内存成本 :缓冲区管理和GC压力

内存不足会导致频繁的磁盘溢写

GC暂停影响整体性能

成本优化策略:

减少Shuffle数据量:使用Combiner进行本地预聚合

优化序列化:使用Kryo等高效序列化框架

合理配置内存:平衡执行内存和存储内存

网络优化:启用数据压缩,优化网络拓扑

3.2 Shuffle的两个阶段 Shuffle操作分为两个关键阶段:Shuffle Write(写阶段)和Shuffle Read(读阶段)。理解这两个阶段的详细机制是优化Shuffle性能的基础。

3.2.1 Shuffle Write阶段深度解析 Shuffle Write阶段是整个Shuffle过程的起点,负责将数据按照分区逻辑重新组织并写入存储。

详细的内部流程:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 public class ShuffleWriteManager { private final int numPartitions; private final Partitioner partitioner; private final boolean mapSideCombine; private final Serializer serializer; public void writeShuffleData (Iterator<Product2<K, V>> records) { Map<Integer, List<Product2<K, V>>> partitionedData = partitionData(records); if (mapSideCombine) { partitionedData = applyLocalCombiner(partitionedData); } if (needSorting()) { partitionedData = sortWithinPartitions(partitionedData); } for (Map.Entry<Integer, List<Product2<K, V>>> entry : partitionedData.entrySet()) { int partitionId = entry.getKey(); writePartitionToDisk(partitionId, entry.getValue()); } generateIndexFile(); } private Map<Integer, List<Product2<K, V>>> partitionData (Iterator<Product2<K, V>> records) { Map<Integer, List<Product2<K, V>>> partitions = new HashMap <>(); while (records.hasNext()) { Product2<K, V> record = records.next(); int partitionId = partitioner.getPartition(record._1); partitions.computeIfAbsent(partitionId, k -> new ArrayList <>()).add(record); if (shouldSpillToDisk(partitions)) { spillToDisk(partitions); partitions.clear(); } } return partitions; } }

内存管理与溢写机制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 public class ShuffleMemoryManager { private final long maxMemoryPerTask; private final double spillThreshold; private long currentMemoryUsage; public boolean shouldSpillToDisk (Map<Integer, List<Product2<K, V>>> partitions) { currentMemoryUsage = estimateMemoryUsage(partitions); return currentMemoryUsage > maxMemoryPerTask * spillThreshold; } public void spillToDisk (Map<Integer, List<Product2<K, V>>> partitions) { int largestPartition = findLargestPartition(partitions); byte [] serializedData = serializePartition(partitions.get(largestPartition)); File spillFile = createSpillFile(); writeToFile(spillFile, serializedData); recordSpillInfo(largestPartition, spillFile); partitions.remove(largestPartition); System.gc(); } }

文件组织结构:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 public class ShuffleFileManager { private File dataFile; private File indexFile; public void writeShuffleOutput (Map<Integer, byte []> partitionData) { try (FileOutputStream dataOut = new FileOutputStream (dataFile); DataOutputStream indexOut = new DataOutputStream (new FileOutputStream (indexFile))) { long currentOffset = 0 ; for (int partitionId = 0 ; partitionId < numPartitions; partitionId++) { byte [] data = partitionData.get(partitionId); if (data != null ) { dataOut.write(data); indexOut.writeLong(currentOffset); indexOut.writeLong(data.length); currentOffset += data.length; } else { indexOut.writeLong(currentOffset); indexOut.writeLong(0 ); } } } } }

3.2.2 Shuffle Read阶段深度解析 Shuffle Read阶段负责从各个节点拉取数据并进行合并处理。

数据拉取的详细机制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 public class ShuffleReader { private final ShuffleBlockResolver blockResolver; private final int startPartition; private final int endPartition; public Iterator<Product2<K, C>> read () { List<BlockManagerId> blockManagers = getShuffleBlockLocations(); ShuffleBlockFetcherIterator fetchIter = createFetchIterator(blockManagers); Iterator<Product2<K, C>> deserializedIter = deserializeStream(fetchIter); if (needAggregation()) { return createAggregationIterator(deserializedIter); } if (needOrdering()) { return createSortingIterator(deserializedIter); } return deserializedIter; } }

网络数据拉取机制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 public class ShuffleBlockFetcherIterator implements Iterator <Tuple2<BlockId, InputStream>> { private final Queue<FetchRequest> fetchRequests; private final BlockingQueue<FetchResult> results; private final ExecutorService fetchExecutor; public ShuffleBlockFetcherIterator (List<BlockManagerId> blockManagers) { this .fetchRequests = createFetchRequests(blockManagers); this .results = new LinkedBlockingQueue <>(); this .fetchExecutor = Executors.newFixedThreadPool(5 ); startFetching(); } private void startFetching () { for (FetchRequest request : fetchRequests) { fetchExecutor.submit(() -> { try { InputStream data = fetchBlockFromRemote(request); results.put(new FetchResult (request.blockId, data, null )); } catch (Exception e) { results.put(new FetchResult (request.blockId, null , e)); } }); } } private InputStream fetchBlockFromRemote (FetchRequest request) { NettyTransportConf conf = new NettyTransportConf (); TransportClient client = createTransportClient(request.address, conf); ByteBuffer response = client.sendRpcSync( new OpenBlocks (request.blockId).toByteBuffer(), 30000 ); return new ByteBufferInputStream (response); } @Override public boolean hasNext () { return !fetchRequests.isEmpty() || !results.isEmpty(); } @Override public Tuple2<BlockId, InputStream> next () { try { FetchResult result = results.take(); if (result.exception != null ) { throw new RuntimeException ("Failed to fetch block" , result.exception); } return new Tuple2 <>(result.blockId, result.data); } catch (InterruptedException e) { Thread.currentThread().interrupt(); throw new RuntimeException (e); } } }

数据合并与聚合机制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 public class ShuffleDataMerger { public Iterator<Product2<K, C>> mergeAndAggregate ( Iterator<Product2<K, V>> input, Function<V, C> createCombiner, Function2<C, V, C> mergeValue, Function2<C, C, C> mergeCombiners) { if (exceedsMemoryThreshold(input)) { return externalSortAndMerge(input, createCombiner, mergeValue, mergeCombiners); } return inMemoryMerge(input, createCombiner, mergeValue, mergeCombiners); } private Iterator<Product2<K, C>> externalSortAndMerge ( Iterator<Product2<K, V>> input, Function<V, C> createCombiner, Function2<C, V, C> mergeValue, Function2<C, C, C> mergeCombiners) { ExternalSorter<K, V, C> sorter = new ExternalSorter <>( createCombiner, mergeValue, mergeCombiners ); while (input.hasNext()) { Product2<K, V> record = input.next(); sorter.insertAll(Collections.singletonList(record).iterator()); } return sorter.iterator(); } }

性能优化机制:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 public class ShuffleReadOptimizer { public void optimizeShuffleRead () { List<BlockLocation> localBlocks = filterLocalBlocks(allBlocks); List<BlockLocation> remoteBlocks = filterRemoteBlocks(allBlocks); Iterator<Product2<K, V>> localIter = readBlocks(localBlocks); Iterator<Product2<K, V>> remoteIter = readBlocks(remoteBlocks); return Iterators.concat(localIter, remoteIter); } private boolean isLocalBlock (BlockLocation block) { return block.getHosts().contains(getCurrentNodeId()); } }

关键特点总结:

Shuffle Write阶段:

数据按分区器规则重新组织

支持本地预聚合减少数据量

内存不足时自动溢写到磁盘

生成索引文件便于快速定位

Shuffle Read阶段:

并发从多个节点拉取数据

支持本地数据优先读取

自动处理网络异常和重试

支持外部排序处理大数据集

这两个阶段的协调工作确保了数据在分布式环境中的正确重新分布,但也带来了显著的性能开销。理解这些机制有助于我们针对性地进行优化。

六、Spark3.x 性能优化策略大全 理解了算子原理和Shuffle机制后,让我们深入探讨如何优化Spark应用的性能。本章将各种优化策略按类别整理,便于实际应用。

6.1 序列化优化:Kryo vs Java原生序列化 Kryo序列化详解:

Kryo是一个高性能的Java序列化框架,相比Java原生序列化有显著的性能提升。

解决的问题:

序列化开销大 :Java原生序列化速度慢、体积大网络传输效率低 :Shuffle过程中大量数据需要序列化传输内存占用多 :序列化后的对象占用更多内存空间

性能对比:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 public class SerializationBenchmark { public static void main (String[] args) { List<Person> persons = generateTestData(100000 ); long javaStartTime = System.currentTimeMillis(); byte [] javaBytes = javaSerialize(persons); long javaEndTime = System.currentTimeMillis(); long kryoStartTime = System.currentTimeMillis(); byte [] kryoBytes = kryoSerialize(persons); long kryoEndTime = System.currentTimeMillis(); System.out.println("Java序列化时间: " + (javaEndTime - javaStartTime) + "ms" ); System.out.println("Java序列化大小: " + javaBytes.length + " bytes" ); System.out.println("Kryo序列化时间: " + (kryoEndTime - kryoStartTime) + "ms" ); System.out.println("Kryo序列化大小: " + kryoBytes.length + " bytes" ); } }

工作原理:

无反射 :预先注册类型,避免运行时反射压缩算法 :更高效的二进制格式对象池 :复用序列化器对象

自定义Kryo注册器:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 public class MyKryoRegistrator implements KryoRegistrator { @Override public void registerClasses (Kryo kryo) { kryo.register(Person.class); kryo.register(Address.class); kryo.register(scala.collection.mutable.WrappedArray.ofRef.class); kryo.register(ArrayList.class); kryo.register(HashMap.class); kryo.register(HashSet.class); kryo.register(BigDecimal.class, new BigDecimalSerializer ()); } } public class BigDecimalSerializer extends Serializer <BigDecimal> { @Override public void write (Kryo kryo, Output output, BigDecimal object) { output.writeString(object.toString()); } @Override public BigDecimal read (Kryo kryo, Input input, Class<BigDecimal> type) { return new BigDecimal (input.readString()); } }

最佳实践:

1 2 3 4 5 6 7 8 9 10 11 spark.conf().set("spark.serializer" , "org.apache.spark.serializer.KryoSerializer" ); spark.conf().set("spark.kryo.registrator" , "com.example.MyKryoRegistrator" ); spark.conf().set("spark.kryo.registrationRequired" , "true" ); spark.conf().set("spark.kryoserializer.buffer" , "256k" ); spark.conf().set("spark.kryoserializer.buffer.max" , "1g" ); JavaRDD<Person> persons = sc.parallelize(personList); persons.cache(); long count = persons.count();

6.2 分区优化策略 6.2.1 自适应分区(Adaptive Partition) 自适应分区是Spark3.x引入的智能分区管理机制,用于解决分区大小不均匀的问题。

解决的问题:

小文件问题 :过滤后某些分区变得很小,导致任务数量过多资源浪费 :空分区或极小分区浪费Executor资源性能下降 :过多的小任务增加调度开销

工作原理:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 public class AdaptivePartitionManager { private final long targetPartitionSize = 128 * 1024 * 1024 ; private final int minPartitions = 1 ; private final int maxPartitions = 200 ; public int calculateOptimalPartitions (long totalDataSize, int currentPartitions) { int idealPartitions = (int ) (totalDataSize / targetPartitionSize); return Math.max(minPartitions, Math.min(maxPartitions, idealPartitions)); } public List<Partition> coalescePartitions (List<Partition> partitions) { List<Partition> result = new ArrayList <>(); long currentSize = 0 ; List<Partition> currentGroup = new ArrayList <>(); for (Partition partition : partitions) { if (currentSize + partition.getSize() <= targetPartitionSize) { currentGroup.add(partition); currentSize += partition.getSize(); } else { if (!currentGroup.isEmpty()) { result.add(mergePartitions(currentGroup)); } currentGroup.clear(); currentGroup.add(partition); currentSize = partition.getSize(); } } if (!currentGroup.isEmpty()) { result.add(mergePartitions(currentGroup)); } return result; } }

实际效果对比:

1 2 3 4 5 6 7 8 9 10 11 12 13 JavaRDD<String> largeDataset = sc.textFile("large_file.txt" , 100 ); JavaRDD<String> filtered = largeDataset.filter(line -> line.contains("ERROR" )); spark.conf().set("spark.sql.adaptive.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.coalescePartitions.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.advisoryPartitionSizeInBytes" , "128MB" ); Dataset<String> adaptiveFiltered = spark.read().textFile("large_file.txt" ) .filter(line -> line.contains("ERROR" ));

6.3 自适应查询执行(AQE) 1 2 3 4 spark.conf().set("spark.sql.adaptive.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.coalescePartitions.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.skewJoin.enabled" , "true" );

自适应查询执行(AQE)详解:

AQE是Spark3.x引入的革命性功能,能够在运行时根据实际数据统计动态优化查询计划。

解决的问题:

静态优化的局限性 :传统优化器只能基于统计信息进行静态优化数据倾斜难以预测 :运行前无法准确预知数据分布情况资源利用率低 :固定的执行计划无法适应数据变化

AQE的三大核心功能:

1. 动态合并Shuffle分区

1 2 3 4 5 6 7 8 9 10 11 12 13 14 spark.conf().set("spark.sql.shuffle.partitions" , "200" ); spark.conf().set("spark.sql.adaptive.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.coalescePartitions.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.advisoryPartitionSizeInBytes" , "128MB" ); Dataset<Row> df = spark.read().parquet("large_table.parquet" ); Dataset<Row> result = df.groupBy("category" ).sum("amount" );

2. 动态切换Join策略

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 Dataset<Row> largeTable = spark.read().parquet("large_table.parquet" ); Dataset<Row> smallTable = spark.read().parquet("small_table.parquet" ) .filter(col("active" ).equalTo(true )); spark.conf().set("spark.sql.adaptive.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.localShuffleReader.enabled" , "true" ); Dataset<Row> joined = largeTable.join(smallTable, "id" );

3. 数据倾斜自动处理

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 spark.conf().set("spark.sql.adaptive.skewJoin.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.skewJoin.skewedPartitionThresholdInBytes" , "256MB" ); spark.conf().set("spark.sql.adaptive.skewJoin.skewedPartitionFactor" , "5" ); Dataset<Row> orders = spark.read().parquet("orders.parquet" ); Dataset<Row> products = spark.read().parquet("products.parquet" ); Dataset<Row> skewedJoin = orders.join(products, "product_id" );

AQE工作原理:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 public class AdaptiveQueryOptimizer { public QueryPlan optimize (QueryPlan originalPlan) { StageResult result = executeStage(originalPlan.getFirstStage()); RuntimeStatistics stats = collectStatistics(result); QueryPlan optimizedPlan = reoptimize(originalPlan, stats); return optimizedPlan; } private QueryPlan reoptimize (QueryPlan plan, RuntimeStatistics stats) { QueryPlan newPlan = plan; if (shouldCoalescePartitions(stats)) { newPlan = coalescePartitions(newPlan, stats); } if (shouldSwitchJoinStrategy(stats)) { newPlan = switchJoinStrategy(newPlan, stats); } if (hasDataSkew(stats)) { newPlan = handleDataSkew(newPlan, stats); } return newPlan; } }

性能提升效果:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 public class AQEPerformanceTest { public void testAQEImpact () { spark.conf().set("spark.sql.adaptive.enabled" , "false" ); long startTime1 = System.currentTimeMillis(); runComplexQuery(); long withoutAQE = System.currentTimeMillis() - startTime1; spark.conf().set("spark.sql.adaptive.enabled" , "true" ); long startTime2 = System.currentTimeMillis(); runComplexQuery(); long withAQE = System.currentTimeMillis() - startTime2; System.out.println("AQE性能提升: " + (double )(withoutAQE - withAQE) / withoutAQE * 100 + "%" ); } }

AQE配置最佳实践:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 spark.conf().set("spark.sql.adaptive.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.coalescePartitions.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.advisoryPartitionSizeInBytes" , "128MB" ); spark.conf().set("spark.sql.adaptive.coalescePartitions.minPartitionNum" , "1" ); spark.conf().set("spark.sql.adaptive.skewJoin.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.skewJoin.skewedPartitionThresholdInBytes" , "256MB" ); spark.conf().set("spark.sql.adaptive.skewJoin.skewedPartitionFactor" , "5" ); spark.conf().set("spark.sql.adaptive.localShuffleReader.enabled" , "true" ); spark.conf().set("spark.sql.adaptive.logLevel" , "INFO" );

6.4 Shuffle优化策略 6.4.1 Map-side聚合 Map-side聚合是Spark中一种重要的优化技术,在Shuffle Write阶段对相同key的数据进行预聚合。

解决的问题:

网络传输量大 :大量重复key导致Shuffle数据量巨大内存压力大 :Reduce端需要处理大量重复数据性能瓶颈 :网络IO成为系统瓶颈

工作原理:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 public class MapSideCombiner <K, V, C> { private Map<K, C> combinerMap = new HashMap <>(); private final Function<V, C> createCombiner; private final Function2<C, V, C> mergeValue; public MapSideCombiner (Function<V, C> createCombiner, Function2<C, V, C> mergeValue) { this .createCombiner = createCombiner; this .mergeValue = mergeValue; } public void insert (K key, V value) { C combiner = combinerMap.get(key); if (combiner == null ) { combinerMap.put(key, createCombiner.call(value)); } else { combinerMap.put(key, mergeValue.call(combiner, value)); } if (combinerMap.size() > 1000 ) { spillToDisk(); } } private void spillToDisk () { writeToSpillFile(combinerMap); combinerMap.clear(); } }

效果对比:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 JavaPairRDD<String, Integer> withoutCombiner = words .mapToPair(word -> new Tuple2 <>(word, 1 )) .reduceByKey((a, b) -> a + b); JavaPairRDD<String, Integer> withCombiner = words .mapToPair(word -> new Tuple2 <>(word, 1 )) .combineByKey( value -> value, (acc, value) -> acc + value, (acc1, acc2) -> acc1 + acc2 );

手动实现map-side聚合:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 JavaPairRDD<String, Integer> manualCombiner = words.mapPartitionsToPair(iter -> { Map<String, Integer> localMap = new HashMap <>(); while (iter.hasNext()) { String word = iter.next(); localMap.merge(word, 1 , Integer::sum); } return localMap.entrySet().stream() .map(entry -> new Tuple2 <>(entry.getKey(), entry.getValue())) .iterator(); }).reduceByKey((a, b) -> a + b);

适用场景判断:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 public boolean shouldUseCombiner (JavaPairRDD<K, V> rdd) { long totalCount = rdd.count(); long distinctCount = rdd.keys().distinct().count(); double selectivity = (double ) distinctCount / totalCount; return selectivity < 0.5 ; } if (shouldUseCombiner(originalRDD)) { result = originalRDD.combineByKey(...); } else { result = originalRDD.reduceByKey((a, b) -> a + b); }

6.4.2 网络和内存优化 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 spark.conf().set("spark.network.timeout" , "800s" ); spark.conf().set("spark.executor.heartbeatInterval" , "60s" ); spark.conf().set("spark.io.compression.codec" , "snappy" ); spark.conf().set("spark.shuffle.file.buffer" , "32k" ); spark.conf().set("spark.shuffle.spill.compress" , "true" ); spark.conf().set("spark.memory.offHeap.enabled" , "true" ); spark.conf().set("spark.memory.offHeap.size" , "1g" ); spark.conf().set("spark.shuffle.spill" , "true" ); spark.conf().set("spark.shuffle.spill.compress" , "true" ); spark.conf().set("spark.shuffle.memoryFraction" , "0.2" );

6.5 动态分区裁剪(DPP) 动态分区裁剪(DPP)详解:

DPP是Spark3.x中的智能优化技术,能够在Join操作中动态地跳过不需要读取的分区,显著减少IO操作。

解决的问题:

不必要的数据读取 :传统方式会读取所有分区,即使某些分区在Join后会被过滤掉IO瓶颈 :大表的全表扫描成为性能瓶颈资源浪费 :CPU和内存被用于处理最终会被丢弃的数据

工作原理:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 public class DynamicPartitionPruning { public void demonstrateDPP () { Dataset<Row> salesFact = spark.read() .option("basePath" , "s3://data/sales_fact/" ) .parquet("s3://data/sales_fact/year=*/month=*/day=*" ); Dataset<Row> dateDim = spark.read() .parquet("s3://data/date_dim/" ) .filter(col("is_holiday" ).equalTo(true )) .select("date_key" ); spark.conf().set("spark.sql.optimizer.dynamicPartitionPruning.enabled" , "true" ); Dataset<Row> result = salesFact .join(dateDim, salesFact.col("date_key" ).equalTo(dateDim.col("date_key" ))) .select("sales_amount" , "product_id" ); } }

效果对比:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 public class DPPPerformanceTest { public void testDPPImpact () { spark.conf().set("spark.sql.optimizer.dynamicPartitionPruning.enabled" , "false" ); long startTime1 = System.currentTimeMillis(); executeJoinQuery(); long withoutDPP = System.currentTimeMillis() - startTime1; spark.conf().set("spark.sql.optimizer.dynamicPartitionPruning.enabled" , "true" ); long startTime2 = System.currentTimeMillis(); executeJoinQuery(); long withDPP = System.currentTimeMillis() - startTime2; System.out.println("数据读取量减少: " + (1000 - 10 ) / 1000.0 * 100 + "%" ); System.out.println("查询时间减少: " + (double )(withoutDPP - withDPP) / withoutDPP * 100 + "%" ); } }

DPP触发条件:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 public class DPPConditions { public boolean canUseDPP (Dataset<Row> largeTable, Dataset<Row> smallTable) { boolean isPartitioned = largeTable.isPartitioned(); boolean joinOnPartitionColumn = checkJoinCondition(); boolean smallTableIsSmall = estimateTableSize(smallTable) < 10_000_000 ; double pruningRatio = estimatePruningRatio(); boolean significantPruning = pruningRatio > 0.5 ; return isPartitioned && joinOnPartitionColumn && smallTableIsSmall && significantPruning; } }

DPP的内部实现:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 public class DPPExecutor { public Dataset<Row> executeWithDPP (Dataset<Row> factTable, Dataset<Row> dimTable, String joinColumn) { List<Object> filterValues = collectFilterValues(dimTable, joinColumn); PartitionFilter partitionFilter = createPartitionFilter(joinColumn, filterValues); Dataset<Row> prunedFactTable = factTable .filter(partitionFilter) .filter(col(joinColumn).isin(filterValues.toArray())); return prunedFactTable.join(dimTable, joinColumn); } private List<Object> collectFilterValues (Dataset<Row> dimTable, String column) { return dimTable.select(column) .distinct() .collectAsList() .stream() .map(row -> row.get(0 )) .collect(Collectors.toList()); } }

实际应用场景:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 Dataset<Row> orders = spark.read().parquet("orders/year=*/month=*/" ) .alias("orders" ); Dataset<Row> customers = spark.read().parquet("customers/" ) .filter(col("region" ).equalTo("北京" )) .alias("customers" ); Dataset<Row> beijingOrders = orders.join(customers, orders.col("customer_id" ).equalTo(customers.col("customer_id" ))); Dataset<Row> transactions = spark.read().parquet("transactions/date=*/" ) .alias("trans" ); Dataset<Row> holidayDates = spark.read().parquet("holidays/" ) .filter(col("year" ).equalTo(2024 )) .alias("holidays" ); Dataset<Row> holidayTransactions = transactions.join(holidayDates, transactions.col("date" ).equalTo(holidayDates.col("date" ))); Dataset<Row> salesData = spark.read() .parquet("sales/country=*/state=*/city=*/" ) .alias("sales" ); Dataset<Row> targetCities = spark.read().parquet("target_cities/" ) .filter(col("campaign_active" ).equalTo(true )) .alias("cities" ); Dataset<Row> campaignSales = salesData.join(targetCities, expr("sales.country = cities.country AND " + "sales.state = cities.state AND " + "sales.city = cities.city" ));

DPP配置优化:

1 2 3 4 5 6 7 8 9 10 11 spark.conf().set("spark.sql.optimizer.dynamicPartitionPruning.enabled" , "true" ); spark.conf().set("spark.sql.optimizer.dynamicPartitionPruning.useStats" , "true" ); spark.conf().set("spark.sql.optimizer.dynamicPartitionPruning.fallbackFilterRatio" , "0.5" ); spark.conf().set("spark.sql.optimizer.dynamicPartitionPruning.reuseBroadcastOnly" , "true" ); spark.conf().set("spark.sql.adaptive.logLevel" , "INFO" );

DPP最佳实践:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 factTable.join(dimTable, factTable.col("partition_col" ).equalTo(dimTable.col("join_col" ))) factTable.join(dimTable, factTable.col("other_col" ).equalTo(dimTable.col("join_col" ))) Dataset<Row> filteredDim = dimTable.filter(col("active" ).equalTo(true )); factTable.join(filteredDim, "key" ) factTable.join(dimTable, "key" ).filter(col("active" ).equalTo(true ))

6.6 自定义分区器:解决数据倾斜的利器 6.6.1 分区器类型对比 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 public class HashPartitioner extends Partitioner { private final int numPartitions; @Override public int getPartition (Object key) { return Math.abs(key.hashCode()) % numPartitions; } } public class RangePartitioner <K, V> extends Partitioner { private Object[] rangeBounds; @Override public int getPartition (Object key) { return binarySearch(rangeBounds, key); } } public class BusinessPartitioner extends Partitioner { @Override public int getPartition (Object key) { if (key instanceof String) { String strKey = (String) key; if (strKey.startsWith("VIP_" )) { return Math.abs(strKey.hashCode()) % 5 ; } else { return 5 + (Math.abs(strKey.hashCode()) % (numPartitions - 5 )); } } return Math.abs(key.hashCode()) % numPartitions; } }

6.6.2 实际应用场景 场景1:处理热点数据

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 public class ProductPartitioner extends Partitioner { private Set<String> hotProducts; private int numPartitions; public ProductPartitioner (Set<String> hotProducts, int numPartitions) { this .hotProducts = hotProducts; this .numPartitions = numPartitions; } @Override public int getPartition (Object key) { String productId = (String) key; if (hotProducts.contains(productId)) { String saltedKey = productId + "_" + (Math.abs(productId.hashCode()) % 10 ); return Math.abs(saltedKey.hashCode()) % numPartitions; } else { return Math.abs(productId.hashCode()) % numPartitions; } } } Set<String> hotProducts = Set.of("product_001" , "product_002" , "product_003" ); ProductPartitioner partitioner = new ProductPartitioner (hotProducts, 100 );JavaPairRDD<String, Order> orders = ordersRDD.mapToPair(order -> new Tuple2 <>(order.getProductId(), order)); JavaPairRDD<String, Order> partitionedOrders = orders.partitionBy(partitioner);

场景2:地理位置分区

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 public class GeographicPartitioner extends Partitioner { private Map<String, Integer> regionToPartition; private int numPartitions; public GeographicPartitioner (int numPartitions) { this .numPartitions = numPartitions; this .regionToPartition = initializeRegionMapping(); } private Map<String, Integer> initializeRegionMapping () { Map<String, Integer> mapping = new HashMap <>(); mapping.put("北京" , 0 ); mapping.put("上海" , 1 ); mapping.put("深圳" , 2 ); mapping.put("广州" , 3 ); return mapping; } @Override public int getPartition (Object key) { String location = extractLocation((String) key); Integer partition = regionToPartition.get(location); if (partition != null ) { return partition; } else { return 4 + (Math.abs(key.hashCode()) % (numPartitions - 4 )); } } }

场景3:时间序列分区

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 public class TimeBasedPartitioner extends Partitioner { private long timeWindowMillis; private int numPartitions; public TimeBasedPartitioner (long timeWindowMillis, int numPartitions) { this .timeWindowMillis = timeWindowMillis; this .numPartitions = numPartitions; } @Override public int getPartition (Object key) { if (key instanceof Long) { long timestamp = (Long) key; long timeWindow = timestamp / timeWindowMillis; return (int ) (timeWindow % numPartitions); } return Math.abs(key.hashCode()) % numPartitions; } } TimeBasedPartitioner hourlyPartitioner = new TimeBasedPartitioner ( 3600000L , 24 ); JavaPairRDD<Long, LogEntry> hourlyLogs = logsRDD .mapToPair(log -> new Tuple2 <>(log.getTimestamp(), log)) .partitionBy(hourlyPartitioner);

6.6.3 性能优化策略 1. 分区数量选择

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 public class PartitionCalculator { public static int calculateOptimalPartitions (JavaSparkContext sc, long dataSize) { long targetPartitionSize = 128 * 1024 * 1024 ; int dataSizeBasedPartitions = (int ) (dataSize / targetPartitionSize); int totalCores = sc.defaultParallelism(); int coreBasedPartitions = totalCores * 3 ; int minPartitions = totalCores; return Math.max(minPartitions, Math.min(dataSizeBasedPartitions, coreBasedPartitions)); } }

2. 分区器性能测试

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 public class PartitionerEvaluator { public void evaluatePartitioner (JavaPairRDD<String, ?> rdd, Partitioner partitioner) { JavaPairRDD<String, ?> partitionedRDD = rdd.partitionBy(partitioner); Map<Integer, Long> partitionSizes = partitionedRDD .mapPartitionsWithIndex((index, iter) -> { long count = 0 ; while (iter.hasNext()) { iter.next(); count++; } return Collections.singletonList(new Tuple2 <>(index, count)).iterator(); }, false ) .collectAsMap(); double avgSize = partitionSizes.values().stream() .mapToLong(Long::longValue) .average() .orElse(0.0 ); double maxSize = partitionSizes.values().stream() .mapToLong(Long::longValue) .max() .orElse(0L ); double skewness = maxSize / avgSize; System.out.println("平均分区大小: " + avgSize); System.out.println("最大分区大小: " + maxSize); System.out.println("数据倾斜度: " + skewness); if (skewness > 2.0 ) { System.out.println("警告:存在严重数据倾斜!" ); } } }

3. 动态分区策略

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 public class AdaptivePartitioner { public static Partitioner choosePartitioner (JavaPairRDD<String, ?> rdd, int numPartitions) { List<String> sample = rdd.keys().sample(false , 0.01 ).collect(); Map<String, Long> keyFrequency = sample.stream() .collect(Collectors.groupingBy( key -> key, Collectors.counting() )); long avgFrequency = sample.size() / keyFrequency.size(); Set<String> hotKeys = keyFrequency.entrySet().stream() .filter(entry -> entry.getValue() > avgFrequency * 10 ) .map(Map.Entry::getKey) .collect(Collectors.toSet()); if (!hotKeys.isEmpty()) { return new HotKeyPartitioner (hotKeys, numPartitions); } else { return new HashPartitioner (numPartitions); } } }

6.7 数据倾斜处理策略 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 JavaPairRDD<String, Integer> skewedRDD = rdd.mapPartitionsToPair(iter -> { Map<String, Integer> localMap = new HashMap <>(); while (iter.hasNext()) { Tuple2<String, Integer> tuple = iter.next(); localMap.merge(tuple._1, tuple._2, Integer::sum); } return localMap.entrySet().stream() .map(entry -> new Tuple2 <>(entry.getKey(), entry.getValue())) .iterator(); }); JavaPairRDD<String, Integer> twoPhaseAgg = rdd .mapToPair(tuple -> new Tuple2 <>(tuple._1 + "_" + (Math.abs(tuple._1.hashCode()) % 10 ), tuple._2)) .reduceByKey((a, b) -> a + b) .mapToPair(tuple -> new Tuple2 <>(tuple._1.split("_" )[0 ], tuple._2)) .reduceByKey((a, b) -> a + b);

自定义分区器处理倾斜:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 public class SkewPartitioner extends Partitioner { private final int numPartitions; private final String skewedKey; public SkewPartitioner (int numPartitions, String skewedKey) { this .numPartitions = numPartitions; this .skewedKey = skewedKey; } @Override public int numPartitions () { return numPartitions; } @Override public int getPartition (Object key) { if (skewedKey.equals(key)) { return Math.abs(key.hashCode()) % numPartitions; } return Math.abs(key.hashCode()) % numPartitions; } }

自定义分区器详解:

自定义分区器是Spark中解决数据分布不均问题的重要工具,通过控制数据的分区逻辑来优化性能。

解决的问题:

数据倾斜 :某些key的数据量远大于其他key,导致个别分区过大热点问题 :高频访问的数据集中在少数分区,造成负载不均默认分区器局限性 :HashPartitioner无法处理特殊的数据分布模式

分区器类型对比:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 public class HashPartitioner extends Partitioner { private final int numPartitions; @Override public int getPartition (Object key) { return Math.abs(key.hashCode()) % numPartitions; } } public class RangePartitioner <K, V> extends Partitioner { private Object[] rangeBounds; @Override public int getPartition (Object key) { return binarySearch(rangeBounds, key); } } public class BusinessPartitioner extends Partitioner { @Override public int getPartition (Object key) { if (key instanceof String) { String strKey = (String) key; if (strKey.startsWith("VIP_" )) { return Math.abs(strKey.hashCode()) % 5 ; } else { return 5 + (Math.abs(strKey.hashCode()) % (numPartitions - 5 )); } } return Math.abs(key.hashCode()) % numPartitions; } }

实际应用场景:

场景1:处理热点数据